Blue Rock Pigeon Columba livia (photo: JM Garg CC-BY-SA 3.0)

Most people don’t think twice about who reports their CT or MRI scan.

The general public often erroneously assume that it is their own doctor who flips through the hundreds of images, finds the hidden cancer or sneaky life-threatening thrombus and then comes up with a treatment plan.

Either that or they credit the radiographer (the person who takes the pictures) with the important but separate task of image interpretation.

Sadly, the humble radiologist is often forgotten, consigned to a darkened room and left to his or her own devices, invisible to patients and staff alike. This is despite the fact that the average cancer patient will have six scans during their disease course, and that annual numbers of medical imaging tests are increasing exponentially worldwide.

There is also a severe national shortage of radiologists. In fact, there are fewer radiologists in the UK than there are endangered tigers in the wild. This invisible workforce crisis is barely a blip on the radar in the medical world, but one that is increasingly felt in radiology departments up and down the country. Rapid increase in demand versus a limited specialist workforce is a recipe for disaster.

Finding the dancing gorilla

Unhelpfully, recent research has shown that radiologists apparently aren’t even very good at their job anyway. A paper in Psychological Science found that 20 out of 24 radiologists can’t even find a picture of a dancing gorilla embedded into a lung scan.

A group from California also published the perturbing news that pigeons can be trained to find breast cancer in mammograms with roughly the same accuracy as humans “thus avoiding the need to recruit, pay and retain clinicians as subjects for relatively mundane tasks”. I’m not sure if breast cancer patients would find comfort in either a pigeon reading their scans or the discovery of their cancer being described as ‘mundane’.

Clearly no-one is actually going to try to train pigeons to find gorillas any time soon, but there is alternative hope on the horizon, according to the Silicon Valley prophets.

They espouse a recent trend in medical technology from start-ups and investment pioneers that are incubating the next revolution in radiology. According to these futurists, deep-learning, pattern-recognising artificially intelligent systems will process huge volumes of medical imaging data and provide detailed written reports. All hail our sentient overlords!

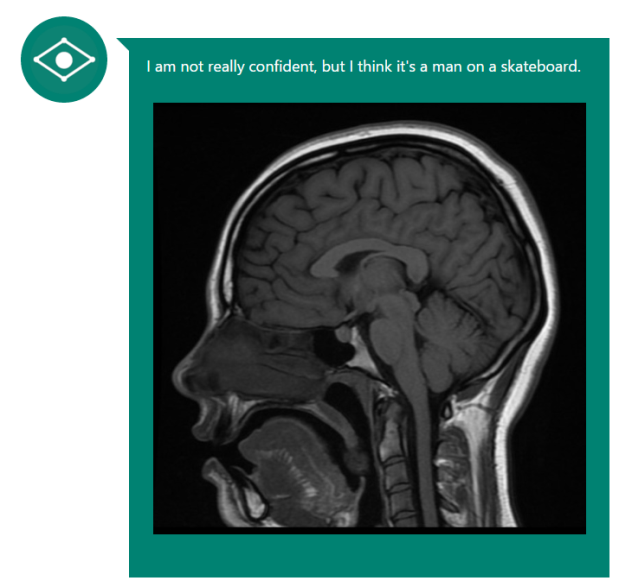

'Man on a skateboard'

Early results weren’t promising, however. When Microsoft’s image analysis tool was shown a brain MRI, it answered “I’m not really confident, but I think it’s a man on a skateboard” (Fig 1, below). Close, but no cigar. IBM’s Watson is reportedly doing better; if given enough relevant background information it can correctly diagnose a lung cancer in 90% of cases.

Of course, not every hospital has the luxury of having its own supercomputer to hand.

Figure 1: Microsoft's CaptionBot seemed to be stuck in the 90s.

There is also an important difference between a computer finding a cancer and a human radiologist describing the exact location, anatomical boundaries and invasive nature of a cancer. One is a binary output (cancer versus no cancer); the other is a useful and nuanced medical opinion on how aggressive a cancer is.

The clinical context

This is why radiologists are still currently needed – to put a cancer into a relevant clinical context, and to find other things on the scan that aren’t cancer, not to mention the current legal requirement to have a human sign off the report! (There is also the small matter of the need to interpret the illegible scrawl that is so often seen on the referral request to the imaging department. Many man-hours have been wasted in deciphering what it is exactly a clinician wants the radiologist to find…).

One of the many hurdles to overcome on the road to digitised nirvana is teaching computers to ‘see’ what humans cannot. Part of my work at The Institute of Cancer Research, London, is to study how numerical data obtained from MRI scans can be used to detect and grade prostate cancer.

The concept is rather elegant – by looking at the MRI data not as an image but as a set of data points we can deduce the presence of a tumour simply by trawling through the quantitation of certain parameters. The anatomy and background details of the patient don’t matter; it’s a pure numbers game.

Here comes the science bit: using well-established MRI techniques called diffusion-weighted imaging (DWI) and dynamic contrast enhanced (DCE) sequences, one can obtain a map of numbers that represent the underlying function of the tissues being scanned.

A numbers game

DWI tells us how much water movement there is (less water movement occurs in tumours than in normal tissue) and DCE measures the blood supply to a region (tumours tend to grow their own chaotic blood supply). By applying numbers to these functional concepts you can theoretically detect a tumour without even having to look at analogue images of the prostate.

If a cluster of data points has significantly lower water movement and higher blood flow than normal tissue, then the likelihood of there being a tumour in this region is high. Even better, the numbers can potentially tell you how aggressive a cancer is – a very low amount of water movement or higher than average blood flow could indicate a more sinister tumour.
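For the curious, the "low water movement plus high blood flow" rule can be sketched in a few lines of Python. This is a toy illustration only, not the method used in the research: the `Voxel` class, the function names, the units and both cut-off values are assumptions invented for the example, and real analysis is considerably more involved.

```python
# Illustrative sketch only: the names, units and thresholds below are
# assumptions made up for this example, not clinical or published values.
from dataclasses import dataclass


@dataclass
class Voxel:
    water_movement: float  # DWI-derived number (hypothetical units)
    blood_flow: float      # DCE-derived number (hypothetical units)


def is_suspicious(voxel, max_water=1.0, min_flow=0.5):
    """Flag a data point with low water movement AND high blood flow."""
    return voxel.water_movement < max_water and voxel.blood_flow > min_flow


def suspicious_fraction(voxels, max_water=1.0, min_flow=0.5):
    """Return the fraction of data points in a region that are flagged."""
    if not voxels:
        return 0.0
    flagged = sum(is_suspicious(v, max_water, min_flow) for v in voxels)
    return flagged / len(voxels)


# A made-up region of four data points; three meet both criteria.
region = [Voxel(0.7, 0.9), Voxel(0.8, 0.8), Voxel(1.6, 0.2), Voxel(0.6, 0.7)]
print(suspicious_fraction(region))  # prints 0.75
```

A region where most data points are flagged would be one to send to a human for a closer look. How far below or above the cut-offs the numbers fall is what might, in principle, hint at how aggressive the tumour is.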

The silicon evangelists are thus, in part, correct: in the not-too-distant future computers may well be making initial reads on medical images, and alerting humans to the ones they think need more attention.

A revolution in waiting

To be able to process the negative scans rapidly and accurately, and leave the positive scans to the dwindling number of human radiologists, would be a medical breakthrough of the highest order. Combine this processing of 1s and 0s with the radiologist’s keen eye on the standard anatomical images, and you have the potential to powerfully augment cancer diagnosis, and help solve the radiology workforce crisis in one fell swoop.

There is one important caveat though: sadly, this technique is absolutely hopeless at finding the often fatal dancing gorilla in the lungs. Maybe we should train some pigeons to take care of that?

This article was a joint winner of the Professor Mel Greaves Science Writers of the Year 2016, originally presented at the ICR annual conference in June 2016.

Dr Hugh Harvey is a Clinical Research Fellow at the ICR.
